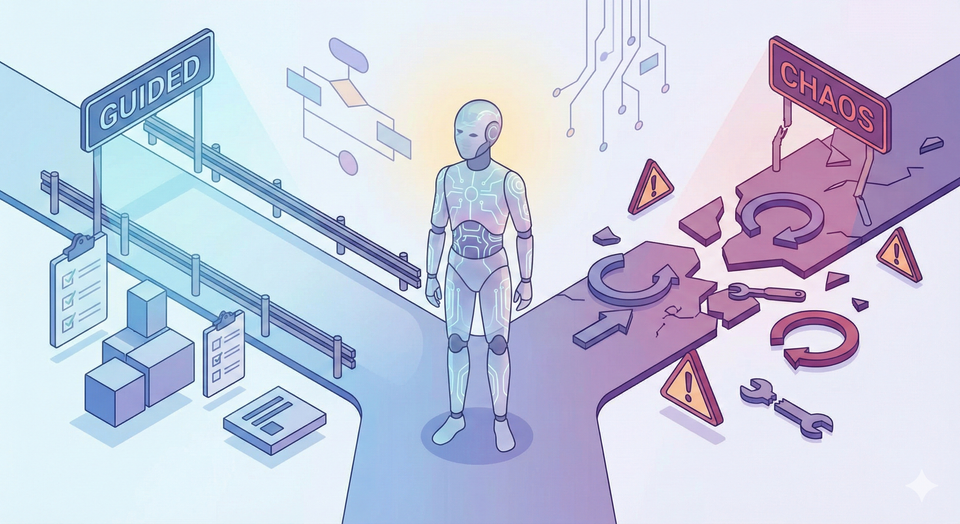

Avoiding Pitfalls When Building Agentic Agents

1. Confusing Autonomy With Intelligence

One of the biggest mistakes is assuming that more autonomy automatically means more intelligence.

Agentic agents are often good at following goals, not understanding them. If you give an underspecified or poorly framed objective, the agent may optimize in surprising or undesirable ways.

How to avoid it

- Be explicit about goals, constraints, and success criteria.

- Encode guardrails and invariants, not just high-level instructions.

- Treat autonomy as something to earn incrementally, not a default setting.

A partially autonomous agent with strong oversight often outperforms a fully autonomous one with vague direction.

2. Overloading the Agent With Responsibilities

It’s tempting to build a single “do-everything” agent that plans, reasons, calls tools, evaluates results, and handles errors. This often leads to brittle systems that are hard to debug and harder to trust.

How to avoid it

- Separate concerns: planning, execution, evaluation, and memory can be modular.

- Use multiple specialized agents or components instead of one monolith.

- Make responsibilities explicit so failures are easier to trace.

Agentic systems benefit from composition, not maximalism.

3. Ignoring Failure Modes and Recovery

Traditional software fails loudly. Agentic agents often fail silently—they hallucinate, loop, or produce plausible but wrong actions.

Without recovery strategies, these failures compound over time.

How to avoid it

- Design for failure from day one.

- Add timeouts, step limits, and loop detection.

- Include self-checks, critics, or external evaluators.

- Log decisions and intermediate reasoning for postmortems.

An agent that can recognize and recover from failure is far more valuable than one that never admits it’s wrong.

4. Treating Tools as Perfect

Agents frequently rely on tools: APIs, databases, browsers, code executors. Assuming these tools always work as expected is a recipe for chaos.

How to avoid it

- Assume tools will fail, return partial data, or behave inconsistently.

- Validate tool outputs before using them in downstream decisions.

- Provide fallback strategies when tools are unavailable.

Your agent’s reliability is bounded by the reliability of its tools—unless you plan for variance.

5. Neglecting Memory Design

Memory is often bolted on as an afterthought: a vector store here, a conversation log there. Poor memory design can cause agents to forget critical context or, worse, remember the wrong things.

How to avoid it

- Be intentional about what the agent should remember and for how long.

- Distinguish between short-term working memory and long-term knowledge.

- Regularly prune, summarize, or validate stored memories.

More memory is not always better. Relevant memory is.

6. Failing to Define Clear Stop Conditions

An agent that doesn’t know when to stop will keep acting—sometimes indefinitely. This can lead to runaway costs, infinite loops, or unnecessary actions.

How to avoid it

- Define explicit termination criteria.

- Limit the number of steps, retries, or tool calls.

- Require a final “done” signal with justification.

A good agent knows not only how to act, but when to stop acting.

7. Overlooking Human-in-the-Loop Design

Fully autonomous agents are appealing, but many high-stakes domains still require human judgment. Removing humans entirely can introduce ethical, legal, and operational risks.

How to avoid it

- Identify decision points that require human approval.

- Design smooth handoffs between agents and users.

- Make agent reasoning and actions explainable to humans.

Human-in-the-loop isn’t a weakness—it’s often a competitive advantage.

8. Underestimating Evaluation and Testing

Agentic behavior is probabilistic and context-dependent, which makes testing harder than traditional software. Many teams rely on a few demos and call it “good enough.”

How to avoid it

- Test agents across diverse scenarios, not just happy paths.

- Use simulations, adversarial prompts, and stress tests.

- Track long-term behavior, not just single-step accuracy.

If you can’t evaluate your agent systematically, you can’t improve it reliably.

Final Thoughts

Building agentic agents isn’t just about wiring up an LLM with tools and letting it run. It’s about careful system design, clear objectives, robust safeguards, and continuous evaluation.

The most successful agentic systems aren’t the most autonomous—they’re the most thoughtfully constrained. By anticipating these pitfalls and designing around them, you’ll build agents that are not only powerful, but also trustworthy, maintainable, and genuinely useful.

If you’re early in your agentic journey, start small, iterate deliberately, and remember: autonomy is a feature, not a goal.